
I am a second-year Master's student at South China University of Technology, currently seeking algorithm engineering positions. My work focuses on building practical AI systems that bridge research and deployment. I learn quickly, adapt readily to new technical domains, and enjoy turning ideas into working systems, taking strong ownership of execution. I received my Bachelor's degree from the College of Software Engineering at South China Agricultural University in 2024, where I served as class president.

Goal: Build efficient, scalable, and reliable AI algorithms for real-world applications.

Research Interests:


Page | PDF | Code | Abstract | BibTeX

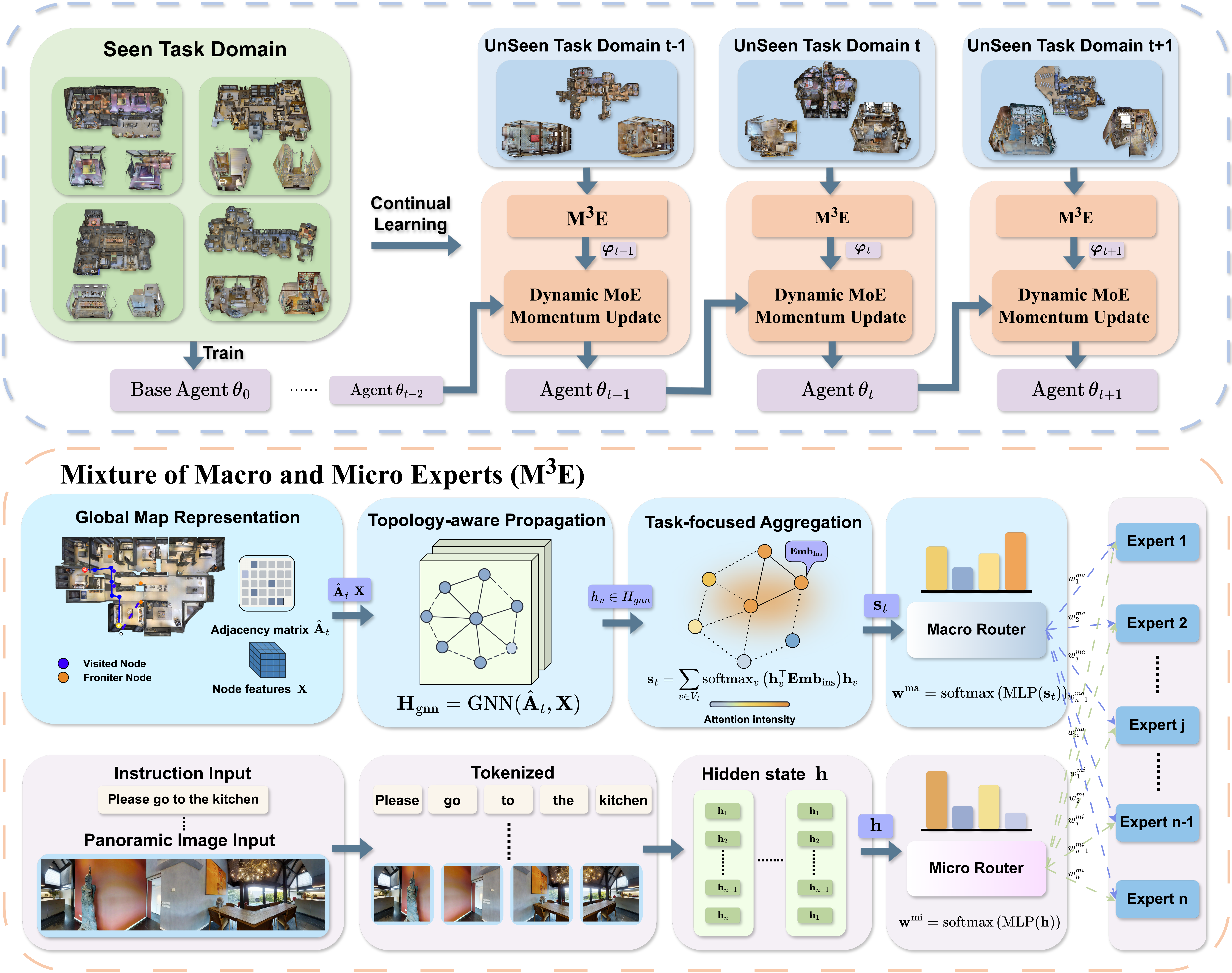

M3E proposes a hierarchical Mixture-of-Experts (MoE) framework for continual vision-and-language navigation that separates global scene reasoning from local instruction-vision alignment. This design helps embodied agents adapt to new environments while mitigating catastrophic forgetting across previously learned domains.

Vision-and-Language Navigation (VLN) agents have shown strong capabilities in following natural language instructions. However, they often struggle to generalize across environments because of catastrophic forgetting, which limits their practical use in real-world settings where agents must continually adapt to new domains. We argue that overcoming forgetting across environments hinges on decoupling global scene reasoning from local perceptual alignment, allowing the agent to adapt to new domains while preserving specialized capabilities. To this end, we propose M3E, the Mixture of Macro and Micro Experts, an environment-aware hierarchical MoE framework for continual VLN. Our method introduces a dual-router architecture that separates navigation into two levels of reasoning. A macro-level, scene-aware router selects strategy experts based on global environmental features, while a micro-level, instance-aware router activates perception experts based on local instruction-vision alignment for step-wise decision making. To preserve knowledge across domains, we adopt a dynamic momentum update strategy that identifies expert utility in new environments and selectively updates or freezes their parameters. We evaluate M3E in a domain-incremental setting on the R2R and REVERIE datasets, where agents learn across unseen scenes without revisiting prior data. Results show that our method consistently outperforms standard fine-tuning and existing continual learning baselines in both adaptability and knowledge retention, offering a parameter-efficient solution for building generalizable embodied agents.
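The abstract names two mechanisms: a dual router (macro and micro) and a utility-driven momentum update that updates or freezes experts. The following is a minimal pure-Python sketch of both ideas, not the M3E implementation. It assumes linear softmax gates with top-k selection, two independent routers, routing frequency as the measure of "expert utility", and a freeze threshold plus utility-dependent momentum; `Router`, `dual_route`, `expert_utility`, `momentum_update`, and every threshold are hypothetical names and values, and each expert is reduced to a flat parameter list.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple


def softmax(scores: Sequence[float]) -> List[float]:
    """Numerically stable softmax over raw gate scores."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


@dataclass
class Router:
    """Hypothetical linear gate: one weight vector per expert, score_i = w_i . features."""
    weights: List[List[float]]

    def route(self, features: Sequence[float], k: int = 1) -> Tuple[List[int], List[float]]:
        scores = [sum(w * f for w, f in zip(row, features)) for row in self.weights]
        probs = softmax(scores)
        # Top-k selection: indices of the k highest gate probabilities.
        chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
        return chosen, probs


def dual_route(macro: Router, micro: Router,
               scene_feat: Sequence[float], align_feat: Sequence[float],
               k_micro: int = 2) -> Tuple[List[int], List[int]]:
    """Macro router picks a strategy expert from global scene features;
    micro router picks perception experts from instruction-vision alignment features."""
    strategy, _ = macro.route(scene_feat, k=1)
    perception, _ = micro.route(align_feat, k=k_micro)
    return strategy, perception


def expert_utility(selections: Sequence[Sequence[int]], num_experts: int) -> List[float]:
    """Assumed utility: fraction of steps in the new domain that selected each expert."""
    counts = [0] * num_experts
    for chosen in selections:
        for i in chosen:
            counts[i] += 1
    steps = max(1, len(selections))
    return [c / steps for c in counts]


def momentum_update(old: Sequence[float], new: Sequence[float], utility: float,
                    freeze_below: float = 0.1, m_min: float = 0.5,
                    m_max: float = 0.99) -> List[float]:
    """Assumed rule: freeze low-utility experts; otherwise blend old and fine-tuned
    parameters with a momentum that shrinks as utility grows."""
    if utility < freeze_below:
        return list(old)  # frozen: keep parameters learned on earlier domains
    m = m_max - (m_max - m_min) * min(utility, 1.0)
    return [m * o + (1.0 - m) * n for o, n in zip(old, new)]


if __name__ == "__main__":
    macro = Router([[1.0, 0.0], [0.0, 1.0]])
    micro = Router([[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]])
    print(dual_route(macro, micro, [2.0, 0.5], [0.1, 0.9, 0.5]))  # ([0], [1, 2])
```

In this sketch, an expert that the new domain rarely routes to keeps its earlier parameters, which is one simple way to limit forgetting, while frequently used experts move faster toward the newly fine-tuned weights. The actual method may define utility and momentum differently.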


Simulation Development

Limited Real-Robot Verification


In this competition, we developed the navigation and grasping pipeline primarily in simulation and transferred it to the physical robot with only a limited number of remote real-robot verification runs. This sim-first workflow matched the goal of the Sim2Real challenge, which is to transfer policies developed in simulation to a physical robot with limited real-world trials, and it ultimately helped our team place 2nd out of 102 teams.


Autonomous Match Footage

Sentry Detection and Tracking Demo


This competition featured fully autonomous robotic shooting battles on the official RoboMaster platform, where robots had to perceive battlefield conditions, make tactical decisions, plan motion, and attack opponents by launching projectiles. I developed the complete sentry target detection and tracking module, which provides target positions for autonomous engagement, and optimized the robot's vision-based targeting system to make shooting more reliable.
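The paragraph does not describe the tracker's internals. As an illustration only, one widely used technique for this kind of target tracking is a constant-velocity Kalman filter run on each coordinate of the detected target, whose velocity estimate also lets the aiming system extrapolate ahead to offset latency. The class `CVKalman1D`, its noise parameters `q` and `r`, and the `lead` helper are hypothetical and are not the module's actual code.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class CVKalman1D:
    """Illustrative constant-velocity Kalman filter for one coordinate of a target.

    State x = [position, velocity]; only position is measured (H = [1, 0]).
    """
    q: float = 1e-2  # assumed process-noise (acceleration) variance
    r: float = 1e-1  # assumed measurement-noise variance
    x: List[float] = field(default_factory=lambda: [0.0, 0.0])
    P: List[List[float]] = field(default_factory=lambda: [[1.0, 0.0], [0.0, 1.0]])

    def predict(self, dt: float) -> None:
        pos, vel = self.x
        self.x = [pos + vel * dt, vel]
        (a, b), (c, d) = self.P
        # P <- F P F^T + Q, with F = [[1, dt], [0, 1]] and a
        # discrete white-noise acceleration model for Q.
        q = self.q
        self.P = [
            [a + dt * (b + c) + dt * dt * d + q * dt**4 / 4, b + dt * d + q * dt**3 / 2],
            [c + dt * d + q * dt**3 / 2, d + q * dt**2],
        ]

    def update(self, z: float) -> None:
        (a, b), (c, d) = self.P
        s = a + self.r          # innovation variance
        k0, k1 = a / s, c / s   # Kalman gain K = P H^T / s
        y = z - self.x[0]       # innovation (measurement residual)
        self.x = [self.x[0] + k0 * y, self.x[1] + k1 * y]
        # P <- (I - K H) P
        self.P = [[(1 - k0) * a, (1 - k0) * b], [c - k1 * a, d - k1 * b]]

    def lead(self, latency: float) -> float:
        """Extrapolate the position `latency` seconds ahead (e.g., to offset aiming delay)."""
        return self.x[0] + self.x[1] * latency


if __name__ == "__main__":
    kf = CVKalman1D()
    dt = 0.1
    for step in range(1, 101):
        kf.predict(dt)
        kf.update(2.0 * step * dt)  # noiseless target moving at 2 units/s
    print(round(kf.x[1], 2), round(kf.lead(0.5), 2))
```

A real module would likely also need detection-to-track association and tuning of `q` and `r` for the camera and target dynamics.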


| Category | Awards |
| --- | --- |
| Scholarships | National Scholarship (Top 1%); First-Class University Scholarship (awarded multiple times, Top 2%) |
| Honors | Outstanding Communist Party Member; Outstanding Student Leader; Outstanding Undergraduate Thesis |